Bus App, Redux

What came from the cloud, goes back to the cloud. Migrating the bus app from the server back to the cloud!

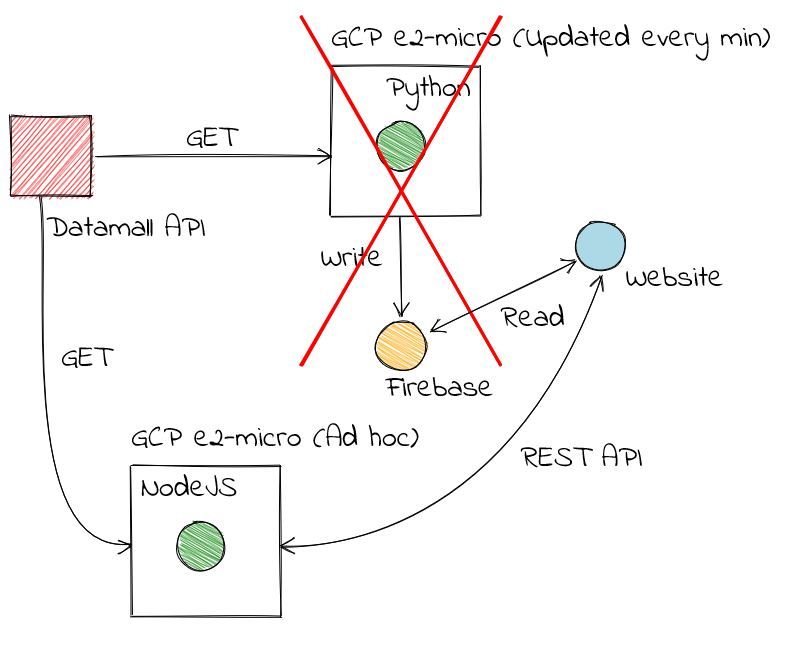

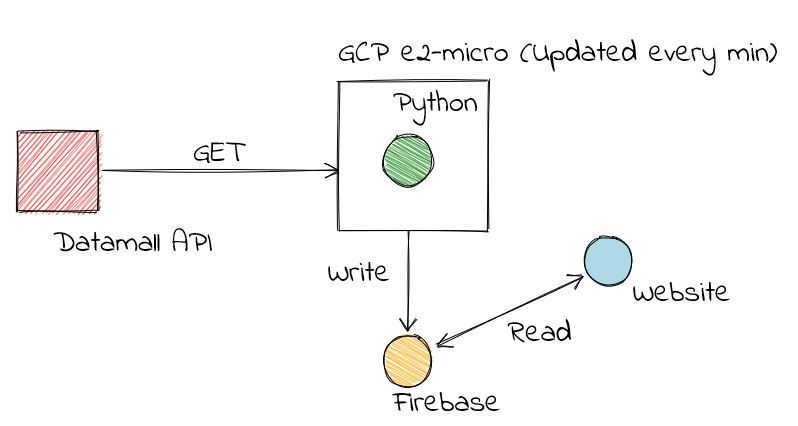

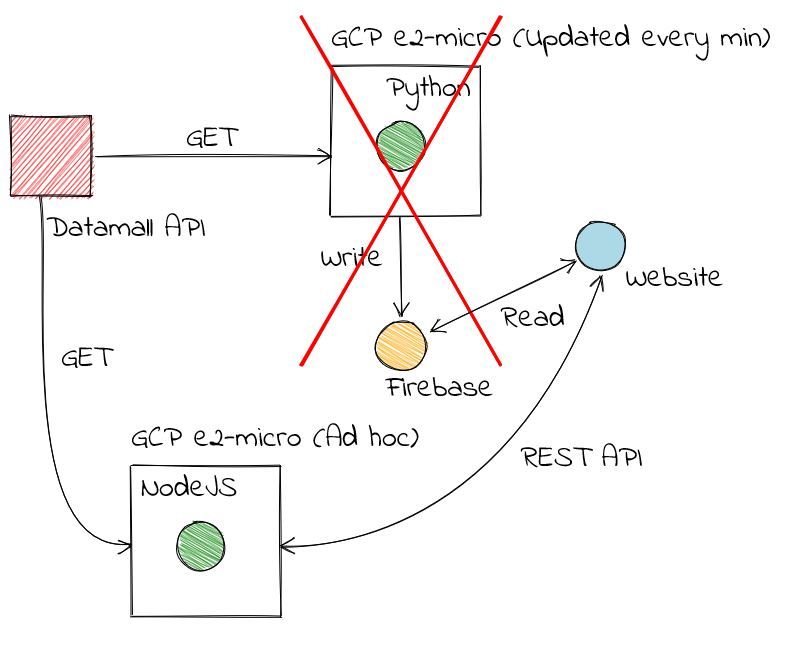

I triumphantly declared that the Cloud was simply too expensive for me in my second post about the bus app, and migrated from Google Firebase to my own hosted instance to save costs.

Yeah, about that. Running your own server turns out to be quite involved the deeper you get into it.

I was happily revelling in my newfound power of managing a cheap server:

- Monthly fight clubs with bots attempting to overwhelm my SSH port. I see you 124.131.2.1, and I raise you a ban for that whole IPv4 address range.

- Trying to remember how I set things up. Wait, how did I set up HTTPS again?

- Updating and maintaining the server. Look at me. I'm the sudo now.

Unfortunately, the last straw came when my preferred cloud provider sent me a nice email informing me that the prices would be raised. Again. For a second time this year. It is not a huge amount, working out to roughly the price of a coffee a month$^1$, but that represents a nearly 50% increase from my original bill.

Yes, Scaleway, why? DigitalOcean and Linode both continue to offer per-month pricing at reasonable rates: it was \$5/month three years ago, and it is still \$5/month now. Unfortunately, while Scaleway had the best price and performance on the market when I started out (~\$3/month), its prices have risen every year since.

To me, it isn't clear how monthly capping prevents Scaleway from implementing spot pricing and reserved instances. After all, it really comes down to how you manage billing. I would also challenge the claim that monthly capping is not relevant. Big Cloud$^2$ lets you rent instances at a lower cost per minute if you plan to use them continuously for a month than if you use them in an ad-hoc manner.

I think the subtext is: we're not making enough to continue providing this service, so we are raising the prices. To me, that is a fair enough reason. I just wish they had put it out there.

I decided to migrate my applications off a standard server and instead focus on a whole new world of serverless applications. In a way I'm grateful for the push.

Migration

Migrating the backend code to Google Cloud Functions turned out to be relatively painless. Because the application was written in NodeJS, and since most serverless offerings can run NodeJS, all I had to do was make some minor tweaks to adapt the code:

- Remove `app.listen` because it is now a function and there is no need to start a server.

- Set the `NodeJS` runtime to `v10` in order to use the `async function *` used in `got`.

- Make the main application route more explicitly `async` by adding the `async` and `await` keywords when requesting the API.

- Change `app.get` to `app.use`, converting the function to a piece of middleware.

On the frontend, I simply had to point it to the new API address and everything worked.

The great thing about serverless functions is that there is no need to manage any security aspects other than those relating to your application. Since I am using a mostly standard NodeJS runtime, I don't need any strange configuration tweaks that might require control of the server instance. Also, since my application is not called that often, it should cost less than running a full server instance.

This was definitely less painful than I expected.

Until I wake up to a \$200 bill$^3$.

[1] This is how I like to count costs.

[2] Big Cloud (Like Big Oil) consists of Google, Amazon Web Services (AWS) and Azure (Microsoft). 'Big Cloud' is not a thing but I'm trying to make it a thing.

[3] No cloud provider lets you set a hard limit on your spending.
