Automatically resume curl downloads that drop below a certain speed

I recently ran into the problem of having a rather crappy connection and wanting to download a really large file. Often the speed would climb to the kilobyte range before falling back down to 0, where it would not recover. However, I could not spend all my time watching my download, so I cobbled together a solution that makes curl resume the download automatically whenever it detects that the speed has fallen below a certain threshold. The following is the command for a Linux terminal:

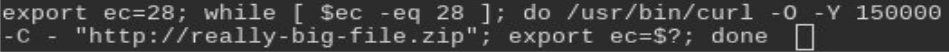

export ec=28; while [ $ec -eq 28 ]; do /usr/bin/curl -O -Y 150000 -C - "https://really-big-file.zip"; export ec=$?; done

Credit has to go to Nunez-Iglesias for writing this bash one-liner. $? holds the exit code of the last command in bash, and curl exits with code 28 when it aborts a download for being too slow. The threshold is set with the -Y option and is given in bytes per second; by default curl gives up once the speed has stayed below it for 30 seconds. Here I used 150000, which is 150 kB/s. So as long as the last exit code is 28, the loop keeps rerunning the command. The "-C -" option tells curl to continue the download where it last broke off, working out the offset from the partial file already on disk. If you are having problems, leave this option out and run the plain curl download first. Once it breaks off, switch to the command above.
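For anyone who prefers something more readable than a one-liner, here is a rough sketch of the same loop as a bash function. It is not part of the original one-liner: the function name resume_download, the CURL variable and the MAX_TRIES cap are my own illustrative additions, the cap being there only so the loop cannot spin forever on a dead link.

```bash
#!/usr/bin/env bash
# Sketch: rerun curl for as long as it aborts with exit code 28 (too slow / timed out).
# resume_download, CURL and MAX_TRIES are hypothetical names chosen for this example.

CURL="${CURL:-curl}"            # command to invoke; override if curl lives elsewhere
MAX_TRIES="${MAX_TRIES:-50}"    # assumed safety cap on the number of attempts

resume_download() {
    local url="$1"
    local min_speed="${2:-150000}"   # bytes per second, same value as the one-liner
    local tries=0
    local ec=28

    while [ "$ec" -eq 28 ] && [ "$tries" -lt "$MAX_TRIES" ]; do
        tries=$((tries + 1))
        # -O    save under the remote file name
        # -Y    abort when the speed stays below min_speed
        # -C -  resume from the size of the partial file on disk
        if "$CURL" -O -Y "$min_speed" -C - "$url"; then
            ec=0
        else
            ec=$?
        fi
    done

    # 0 on success, 28 if the cap was reached, otherwise curl's own error code
    return "$ec"
}
```

After sourcing the file, it would be called as resume_download "https://really-big-file.zip" 150000. Any exit code other than 28 ends the loop straight away, so a genuine error such as a bad URL is not retried.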

This allowed me to finish my download within a reasonable time frame despite a rather spotty internet connection. I hope it can be of help to you.